Overview

Imagine having to walk from place to place without being able to see. How confident would you be that you could do so without bumping into things and hurting yourself? This is what blind people do every day with their white canes. However, because a white cane stays low to the ground, it cannot detect obstacles higher up, such as tabletops and street signs. To address this problem, we created HitAlert, a collision alert system for the visually impaired.

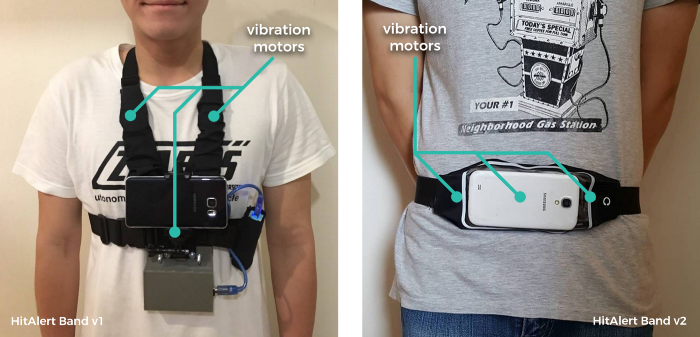

HitAlert helps visually impaired people walk more safely and confidently by alerting them to collision risks. The app does this by analyzing video from a smartphone’s camera in real time. The project also includes a Bluetooth-powered belt, the “HitAlert Band”, which gives app users richer and easier-to-understand haptic feedback about collision threats.

Awards

- 2nd Prize in the Assistive Technology Category, the 19th National Software Contest (No 1st prize was awarded in this category, so this was effectively the category’s top award.)

- Thailand Representative, Technology Category, Global Student Innovation Challenge for Assistive Technology (gSIC-AT)

Tools Used

- OpenCV for Android: an open-source library for computer vision and image processing

- Android Studio: the IDE for Android app development

- Arduino: used to create the “HitAlert Band”, a peripheral that works with the app to provide more intuitive feedback

The Process

We followed an iterative process in which our target users, blind and visually impaired people, helped us test the product as we improved it. We started by talking to visually impaired people to gather requirements and discover design constraints. We learned about screen readers, how people use them, and how apps can be made accessible. After creating a prototype, we tested it with several visually impaired people we reached through various sources and gathered their feedback to improve the product.

The Design

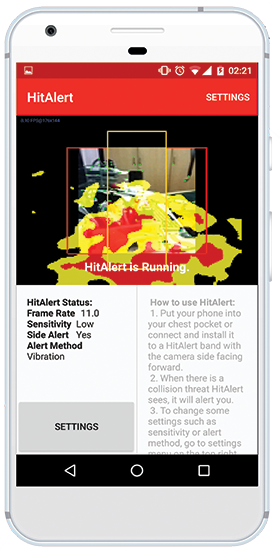

Software

The HitAlert app is the main part of the system. It is designed with accessibility in mind: the items on the screen can all be reached by screen readers. These include:

- A status message “HitAlert is Running”

- Custom configuration and Settings button

- Instruction message

- The screen is divided into sections so that a user can also navigate it by sliding a finger across the screen.

All user interface components on the screen are native Android UI elements. This is to ensure the accessibility of the app.

For alerts, the user can choose from several methods:

- Vibration: The phone vibrates when there is an incoming collision threat. This mode is most useful when the phone sits in the user’s shirt pocket while the app is running.

- Sound (Beeps): Different kinds of beeps signify different kinds of threats, i.e. the direction of the obstacle and the probability of collision. This mode can be timely and informative. However, it takes some time and training to learn and get used to.

- Sound (Spoken Words): Spoken cues, e.g. “On your left!”, are played when the app detects an obstacle. This mode is easier to learn, but it is not the fastest form of feedback.

- Stereo Sound: Instead of using different sounds for different directions, this mode uses the left and right channels of the headphones. It is intended for use with non-ear-blocking headphones, e.g. bone-conduction headphones.

- Bluetooth: Instead of using the phone to issue an alert to the user directly, the app sends Bluetooth signals to paired peripherals.
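
As a rough illustration of how the sound-based modes above might turn a detected threat into output, here is a minimal Python sketch. It is not the app's actual implementation: the `Threat` fields, the linear panning rule, and the beep timing range are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Threat:
    """Hypothetical threat description; these fields are assumptions."""
    direction: float    # -1.0 = far left, 0.0 = straight ahead, 1.0 = far right
    probability: float  # estimated collision probability in [0.0, 1.0]


def _clamp(value: float, low: float, high: float) -> float:
    """Keep a value inside the closed range [low, high]."""
    return max(low, min(high, value))


def stereo_gains(threat: Threat) -> Tuple[float, float]:
    """Return (left, right) channel volumes for a Stereo Sound style alert.

    Simple linear panning: the obstacle's side gets more volume, and the
    overall loudness grows with the collision probability.
    """
    pan = _clamp(threat.direction, -1.0, 1.0)
    loudness = _clamp(threat.probability, 0.0, 1.0)
    left = loudness * (1.0 - pan) / 2.0
    right = loudness * (1.0 + pan) / 2.0
    return left, right


def beep_interval_ms(threat: Threat, slowest: int = 1000, fastest: int = 100) -> int:
    """Return the pause between beeps; likelier collisions beep faster."""
    p = _clamp(threat.probability, 0.0, 1.0)
    return round(slowest - p * (slowest - fastest))
```

With these assumptions, a certain collision on the far left, `Threat(direction=-1.0, probability=1.0)`, plays at full volume in the left channel only, and its beeps repeat at the fastest interval.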

Hardware

We also built our own Bluetooth peripheral for the app, called HitAlert Band. This device connects to the HitAlert app, receives alert signals, and vibrates in different areas and with different intensities to signify each type of collision threat. We created a prototype and tested it with our user group. The first prototype turned out to be too heavy, uncomfortable to wear, and too high-profile. We then redesigned it according to the feedback we got from the testing.
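
To make the idea of "different areas and different intensities" concrete, here is a small Python sketch of one way an alert could be packed into bytes for a band like this and checked on the receiving side. The packet layout is entirely hypothetical: the header byte, the three-motor arrangement, and the checksum are assumptions for illustration, not the format HitAlert Band actually uses.

```python
from typing import List

HEADER = 0xA5    # hypothetical start-of-packet marker
MOTOR_COUNT = 3  # assumed motor layout: left, center, right


def encode_alert(intensities: List[int]) -> bytes:
    """Pack one vibration intensity (0-255) per motor into a packet.

    Assumed layout: [HEADER, motor0, motor1, motor2, checksum], where the
    checksum is the sum of all preceding bytes modulo 256.
    """
    if len(intensities) != MOTOR_COUNT:
        raise ValueError(f"expected {MOTOR_COUNT} intensities")
    # Clamp each value so it fits in a single byte.
    levels = [max(0, min(255, int(v))) for v in intensities]
    checksum = (HEADER + sum(levels)) % 256
    return bytes([HEADER, *levels, checksum])


def decode_alert(packet: bytes) -> List[int]:
    """Validate a packet and return the per-motor intensities."""
    if len(packet) != MOTOR_COUNT + 2 or packet[0] != HEADER:
        raise ValueError("malformed packet")
    if sum(packet[:-1]) % 256 != packet[-1]:
        raise ValueError("checksum mismatch")
    return list(packet[1:-1])
```

In this sketch, `encode_alert([255, 0, 0])` would ask only the left motor to vibrate at full strength, and `decode_alert` rejects any packet whose checksum does not match, so a corrupted transmission is dropped rather than turned into a misleading vibration.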